Where I said “common sense” I mean “reasoning” (#17 of 2024)

Today's article is almost an opinion piece. We are going to reflect on how, in the debate about artificial intelligence, we have gone from talking about “common sense” to focusing on “reasoning.” We will explore that shift and discuss how current language models are challenging traditional notions of understanding and thought.

Thank you for reading!

Image generated by ChatGPT with the prompt: “generate an image showing a robot thinking”.

Over the last few days I have been reading two highly recommended books on artificial intelligence: Artificial Intelligence: A Guide for Thinking Humans by Melanie Mitchell, and Artificial Intelligence: 10 Things You Should Know by Tim Rocktäschel.

They are quite different books. The first is deeper and more extensive, was published in 2020, and places a lot of emphasis on the problems AI algorithms face in reaching an understanding of the world similar to that of humans. The second is shorter, written as a series of brief essays, published recently, and presents a very optimistic view of the possibilities of today's LLMs becoming the central elements of a future AI with capabilities exceeding those of humans.

Both books are written by excellent researchers and complement each other wonderfully. The first gives us a cautious and very well-reasoned view of the difficulty of building generally intelligent algorithms, what we now call AGI, while the second shows how the advances of the last few years represent an important step that may very quickly lead us to systems with superhuman capabilities, and it explains this in a very understandable and reasoned way, unlike some others.

Common sense

One of the problems Mitchell raises in her book is the problem of common sense. It is a problem that has been present in the field of AI since its beginnings.

The problem of “common sense” in artificial intelligence, especially in natural language processing, refers to the difficulty machines have in interpreting and generating language in a way that is consistent with the implicit knowledge that humans routinely use. This type of knowledge includes the ability to understand ambiguous situations, infer hidden meanings, make assumptions about context, or even grasp implicit intentions and emotions.

In her book, Mitchell gives an example of a situation involving a person going to a restaurant. Let us look at another similar example:

“Sara waved her wand and touched the child's toy with it. The child stopped crying when he saw that it worked again. When Sara got home and told her father what had happened, he became furious and grounded her for a week.”

To understand the situation described above, one has to understand a great many things, besides having read the Harry Potter books: Sara is holding the wand in her hand, the toy was broken and that is why the child was crying, Sara saw the child crying and wanted to help, Sara did something magical with the wand, Sara felt proud for having helped the child, and so on.

Can an AI make these kinds of assumptions?

Mitchell says:

Although automatic natural language processing has advanced a great deal, I do not believe machines can fully understand human language until they have human-like common sense.

And the systems that existed in 2020, the year the book was published, did not have that competence:

Humans possess a fundamental competence that current AI systems lack: common sense.

LLMs arrive

What is the situation today? Have LLMs acquired this common sense?

In recent years, these gigantic systems have been trained on the whole internet in order to predict the next word in a text. Mitchell herself explains how LLMs work in a recent article. The simple objective of predicting the next word yields surprising results and grants these systems enormous capabilities.

The reason is that an AI learning to compress texts with the goal of predicting the next word has to learn an enormous quantity of data and concepts underlying the original texts. For example, when an LLM learns the word that follows the phrase:

“Harry was on a trip away from Hogwarts and used his magic wand. When the teachers found out, they decided to ..... (punish him)”

it is simultaneously learning a huge number of concepts: there are rules limiting the use of magic, one of those rules is that magic can only be used at Hogwarts, if a rule is broken there should be a punishment, teachers are the ones who decide about student behavior, and so on.
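
To make the idea of next-word prediction concrete, here is a minimal sketch in Python. It is only an illustration under strong assumptions: the three-sentence corpus is invented, and counting word pairs (a bigram model) is a far simpler technique than the neural networks real LLMs use. The function names train_bigram and predict_next are hypothetical.

```python
from collections import Counter, defaultdict

# Invented toy corpus (an assumption for illustration only);
# a real LLM is trained on vastly more text.
corpus = [
    "harry broke the rule and the teachers decided to punish him",
    "sara broke the rule and her father decided to punish her",
    "the child fixed the toy and the teachers decided to praise him",
]


def train_bigram(sentences):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigram(corpus)
print(predict_next(model, "to"))
```

Even this toy counter completes “decided to” with the continuation that the invented corpus uses most often. The argument in the text is that, at a vastly larger scale and with a much richer model, the same pressure to predict well forces the system to capture the rules and concepts behind the text.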

The famous scaling hypothesis argues that the more parameters an LLM has and the more diverse the input data, the greater the abstractions these systems are able to learn. As the objective function is optimized, the embeddings representing language tokens are placed in optimal positions in embedding space, and the weights of the LLM neural network are configured to represent transformation functions that reflect higher-level relationships and abstractions.

In reality, this is only a theory, and there are many unknowns about how far this technology can scale and what kinds of input data are necessary in order to achieve higher abstractions. But the level of common-sense understanding reached by current systems is surprising.

For example, let us look at what ChatGPT 4o answers when we give it the previous text about the wand (with Sara renamed Juan) and ask it why the father punished the protagonist.

Me:

Read the following text and answer the question below:

“Juan waved his wand and touched the child's toy with it. The child stopped crying when he saw that it worked again. When Juan got home and told his father what had happened, he became furious and grounded him for a week.”

Question: Why did Juan's father get angry?

ChatGPT:

Juan's father probably got angry because Juan used the wand in a way his father did not approve of, which could indicate that using the wand was forbidden or dangerous.

Me:

Give me four different possible reasons that explain why Juan's father got angry.

ChatGPT:

Here are four alternative possible reasons that could explain why Juan's father got angry:

1. Juan used a forbidden ability: It is possible that using the wand is forbidden in his house or that it is a magical ability Juan should not have used outside certain contexts.

2. The father fears the consequences: The father might be worried that using the wand in public could attract unwanted attention or dangers for the family, as if it were something secret or dangerous.

3. Juan broke a family rule: Perhaps there is a strict family rule about when and how to use magic or special tools, and Juan disobeyed it by fixing the child's toy without permission.

4. The father fears for Juan's safety: The father may have become angry because, although Juan's gesture was well intentioned, using the wand could endanger Juan himself, as if there were some risk associated with its use.

It could be improved (explanations 1 and 3 are somewhat redundant), but it really does feel as if the system “understands” concepts such as prohibition, rules, safety, or risk perfectly well.

A huge amount of progress has been made in just the last four years.

Now the term in the debate is “reasoning”

Despite those advances, many researchers in AI, philosophy, or neuroscience deny that what LLMs do can be called “understanding.” Mitchell explains this position very well in her 2023 article, “The Debate Over Understanding in AI's Large Language Models”, where she presents in great detail the arguments of those who are in favor and those who are against.

And she ends up accepting that LLMs have learned to handle some form of common sense:

It could be argued that, over the past few years, the AI field has created machines with new modes of understanding. Problems that require large amounts of knowledge will continue to favor large-scale statistical models like LLMs.

But she adds an important detail, the new major criticism: what they cannot do is reason and plan. That remains limited to human intelligence:

But those problems for which we have limited knowledge and strong causal mechanisms will favor human intelligence.

When Mitchell speaks about “strong causal mechanisms” and “limited knowledge,” she is referring to our capacity for planning and reasoning. For example, when planning a trip using the web, a person can find flights and hotels, but must also consider factors such as the arrival time and the availability of transportation. If they arrive late at night and there is no public transport, they will look for a hotel near the airport.

This sort of causal reasoning, adjusting the plan in response to unpredictable conditions and carrying out several steps of inference, is, for many authors, not something that can be achieved by today's autoregressive LLMs.

Mitchell herself insists on this point in several recent posts:

- Can Large Language Models Reason? (Sep, 2023)
- The LLM Reasoning Debate Heats Up (Oct, 2024)

The debate has intensified with the release of o1, a model that, according to OpenAI, was built precisely to reason. For example, people on X have been talking a lot in recent days about a paper by Apple researchers in which they fool different LLMs by adding irrelevant data to elementary-school problem statements. I did some quick experiments, and I had the impression that o1 does not suffer from this problem, but it will need more investigation.

LLMs still have a lot of runway

The revolution produced by applying deep learning to language-processing problems raises a big question about the future. How far can this technology be scaled? Will we be able to build with it intelligent agents capable of interacting with our data and with the web and helping us with relevant tasks? Will it be possible to build agents to which we can assign tasks that keep them busy for hours or days, tasks in which they have to gather information step by step, perform experiments, and obtain results?

It is still too early to know. The growth in LLM capabilities has so far been exponential, but we do not know whether this trend will continue or whether we are reaching an inflection point where growth could level off, following a logistic curve, an S-shaped curve that flattens as it reaches a limit.

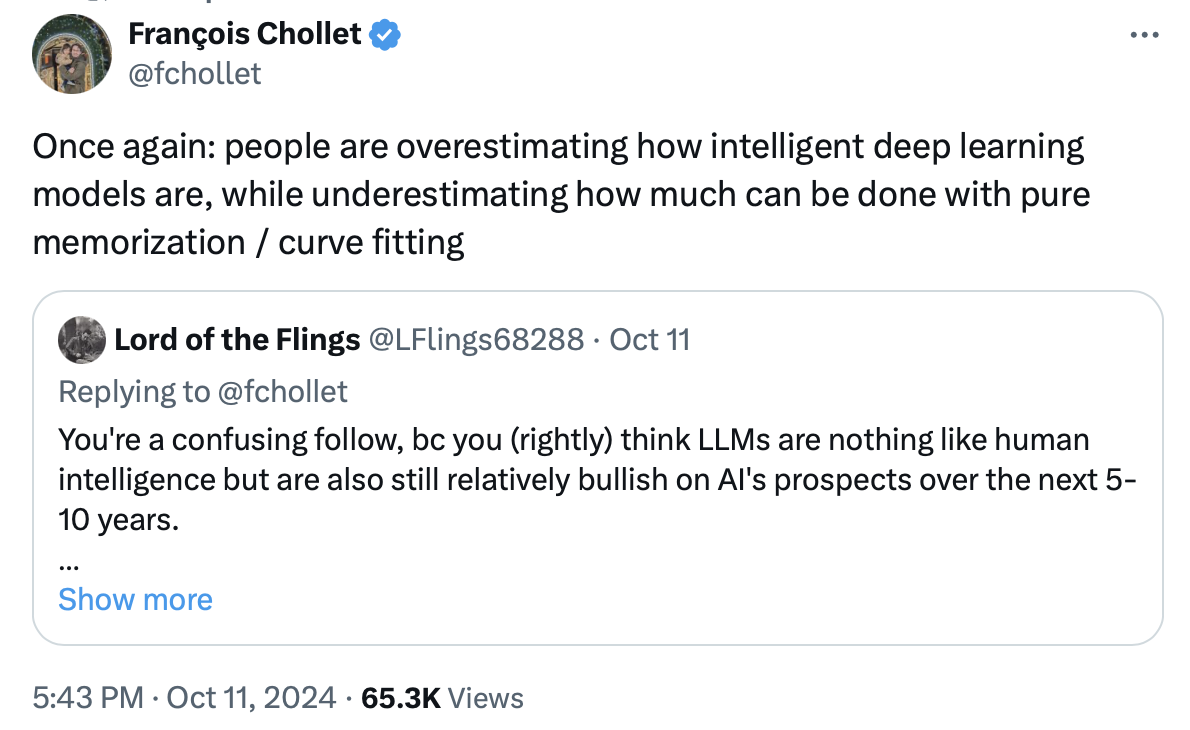
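
The difference between the two growth patterns can be sketched numerically. This is a minimal illustration, not a model of actual LLM progress: the limit, rate, and midpoint values are arbitrary assumptions, and the function names are hypothetical.

```python
import math

# Arbitrary illustrative parameters (assumptions, not measurements).
LIMIT = 100.0     # ceiling that the logistic curve approaches
RATE = 1.0        # growth rate shared by both curves
MIDPOINT = 5.0    # time at which the logistic curve reaches half its limit


def logistic(t, limit=LIMIT, rate=RATE, midpoint=MIDPOINT):
    """S-shaped curve: grows fast at first, then flattens near `limit`."""
    return limit / (1.0 + math.exp(-rate * (t - midpoint)))


def early_exponential(t, limit=LIMIT, rate=RATE, midpoint=MIDPOINT):
    """Pure exponential that matches the logistic curve when t is small."""
    return limit * math.exp(rate * (t - midpoint))


# Print both curves side by side: they agree early on and then diverge.
for t in range(0, 11, 2):
    print(t, round(early_exponential(t), 2), round(logistic(t), 2))
```

With these made-up parameters the two curves are nearly indistinguishable at the start and only separate as the logistic one approaches its limit, which is why it is hard to tell from early data alone which curve we are on.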

It may also be that what is needed is to combine LLMs or refine the training data. OpenAI, by building o1 on a somewhat different paradigm, though it is still an LLM, shows that it is possible to build new systems based on the current ones. Researchers such as the previously mentioned Tim Rocktäschel argue that the abilities current LLMs have to generate alternatives and validate them may be the basis of systems capable of improving themselves. François Chollet himself, whom I have mentioned more than once in this newsletter, says that LLMs, with their method based on memorizing patterns, may still be able to achieve many more things.

Do not work on LLMs

Other researchers are much more critical of the current technology. For example, Yann LeCun argues that current tokens need to be expanded with elements that combine text, video, actions, and other sensory data drawn from the real world. He proposes an architecture also based on learning embeddings, but in a radically different way from current LLMs, called JEPA, Joint Embedding Predictive Architecture.

In one of his talks, he even recommends that young researchers not devote themselves to LLMs if what they want is to discover relevant things rather than just make money, and he makes the following prediction: in the next 2 to 3 years, the efforts of the current giant data centers will not produce results, and people will stop talking about “scaling.” LLMs will be one element of the solution, but not the fundamental one.

But let us not misunderstand LeCun's position. He is not on the side of those who think computers will never be able to think like humans; quite the opposite. He argues that AGI is possible, although with a technology different from the current one. In the talk above he even mentions a time frame of a decade, I suppose to give his boss, Zuck, an answer.

Critics and apocalyptic thinkers

Set against that position, the most critical current within AI denies even that we are seeing advances toward human intelligence. They seem to apply Tesler's theorem, also known as the AI effect:

Intelligence is whatever machines still cannot do.

According to them, intelligence is complex, multifunctional, and deeply related to other intrinsically human elements such as thought, cognition, emotions, and consciousness.

Despite a great deal of evidence, they keep denying that these models have achieved even a small degree of understanding. It feels as if they are afraid that a machine might one day become intelligent.

The anecdote Mitchell recounts at the beginning of her book is very revealing. Douglas Hofstadter, the famous AI researcher and author of the celebrated book Gödel, Escher, Bach: an Eternal Golden Braid, ended up saying at a meeting at Google in 2014:

I am terrified. It seems terrifying to me, very troubling, very sad. They will replace us. We will be relics, left by the wayside.

Mitchell then explains that terror:

Hofstadter's terror was that intelligence, creativity, emotions, and even consciousness itself might be too easy to create, that the aspects of humanity he valued most might end up being merely a “bag of tricks,” that a shallow set of brute-force algorithms might explain the human spirit.

I think versions of that idea are what lead these critical researchers to downplay any progress that occurs. Paradoxically, I think it is also these same ideas that alarm the “apocalyptic” camp represented by people such as Geoffrey Hinton. Deep down they fear that our humanity might be nothing more than a brute-force algorithm learned and captured in billions of parameters.

A different kind of intelligence

When I started writing this article, I did not want to make it too long. But as always happens, one thing led to another, and in the end I opened up a topic I wanted to leave for another day: consciousness, or more specifically, sentience.

I will leave the full argument for another article, but I think the way to escape this terror is to consider that what separates us from LLMs is exactly the same thing that relates us to many other living beings: the possibility of experiencing sensations, pain, pleasure, fear, or joy. Current algorithms, and in my opinion any future algorithm as well, are not capable of feeling.

This frees us from a great many ethical problems that we do have with our relatives: mammals, other vertebrates, and even the more complex invertebrates. Unlike algorithms, these beings possess the capacity to feel pain, pleasure, and other emotional states, which obliges us to consider their well-being and their rights in our ethical decisions.

The lack of sentience in machines allows us to think of them as mere non-sentient “thinking machines” and to accept a view in which “AGI” is not equivalent to “human.”

I will close with the quote from Mitchell that also concludes her article on the debate over understanding:

The challenge for the future is to develop new scientific methods that can reveal the detailed mechanisms of understanding in different forms of intelligence, discern their strengths and limitations, and learn to integrate those truly diverse modes of cognition.

See you in a fortnight! 👋👋