AGI or not AGI? (#14 of 2024)

After a summer break, this week I am bringing you another special article in which, instead of reviewing what happened over the last fortnight, I focus on a single topic. But do not worry: this one will be much shorter than the one I wrote at the end of May on the Herculaneum papyri 😄.

Next week we will return to our usual fortnightly schedule, with an issue in which I will comment on some of the summer's news and on yesterday's surprise: OpenAI's new model.

Thank you for reading. And a warm welcome to the new subscribers.

Image generated by Grok. Prompt: “A computer scientist angrily arguing with a colleague over a blackboard about the definition of AGI”.

Lately the term AGI, Artificial General Intelligence, is on almost everyone's lips. Podcasts, blogs, social networks, newsletters: everyone is talking about whether or not we will reach AGI in X years.

Before risking any prediction, I want to spend a little time on the term itself. Does it still make sense to talk about AGI? Or has it become a tainted term, one best avoided, ever since people like Altman and OpenAI started using it nonstop? Will people look at you askance if you talk about AGI?

Let us start with an anecdote from last week.

Years ago I used to follow Grady Booch on Twitter. He was an important figure in software engineering in the 1980s, when he popularized some very interesting object-oriented design methodologies. I still have a couple of his books from that period.

When the first generative models started to become public, Booch also started talking about AI. At first it was interesting. He highlighted the limitations and problems of these models, and his voice was a useful counterweight to exaggerated doomers such as Sam Harris or Nick Bostrom. But his timeline soon turned into the same kind of repetitive grumbling you get from Gary Marcus: everything negative, everything problematic. One day, after some post I no longer remember, I got annoyed, went full Van Gaal (“always negative, never positive”), and unfollowed him.

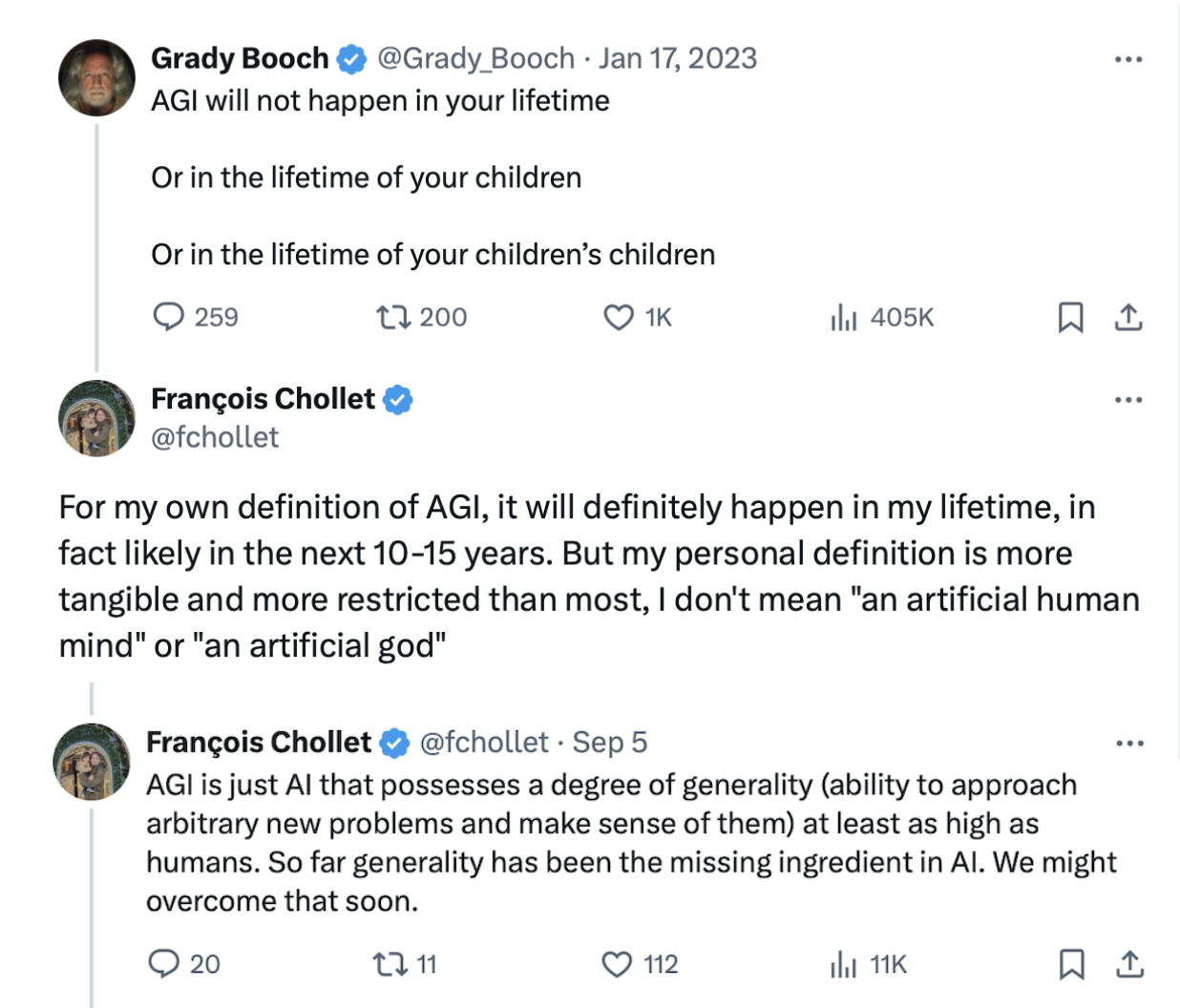

But recently the X algorithm showed me the following exchange between him and François Chollet:

Grady Booch:

AGI will not happen in your lifetime. Nor in your children's lifetime. Nor in your children's children's lifetime.

Booch's post was more than a year old, but for some reason Chollet saw it a few days ago. François is a genuinely nice guy (go watch some of his videos on YouTube) and, instead of doing what I did and unfollowing Booch, he replied politely:

In my own definition of AGI, it will definitely happen in my lifetime, in fact probably within the next 10-15 years. But my personal definition is more concrete and more restrictive than most: for me it is not “an artificial human mind” or “an artificial god.” AGI is simply an AI that possesses a degree of generality (the ability to deal with new problems and understand them) at least as high as that of humans. So far, generality has been the missing ingredient in AI. We may soon manage to develop it.

Booch answered with a joke about the word “generality”:

In general :-) I agree with you, except that, generally speaking, those measures of generality are so vague that they make the bar for success quite low.

Clearly, Booch is not aware of the work Chollet is doing with his ARC Prize (arcprize.org and its account on X), precisely to try to measure, objectively, some of that “generality” needed for AGI. We already covered this prize in the issue on the first fortnight of June.

Chollet did not reply again. What I do not know is whether he, like me, also unfollowed Booch.

This is more than just an anecdote. The confusion around the term AGI keeps growing, and things are getting even more complicated because of its increasing use outside the scientific sphere. Startup executives and would-be influencers on X or YouTube often use the term mainly to attract attention and capture an audience, or money.

But the popularity of the term also has its good side. Programs aimed at a general audience are using it to explain interesting ideas while doing good science outreach. For example, The Economist, in its always-interesting weekly podcast Babbage, has published a special on the topic: “AGI, part one: what is artificial general intelligence?” The program offers a fairly academic perspective, interviewing engineers, computer scientists, and neuroscientists, among others.

Melanie Mitchell, an AI scientist who knows both traditional AI and LLMs in depth (see, for example, her article “Large Language Models” in The Open Encyclopedia of Cognitive Science), discusses a definition tied to human capabilities:

AGI has been defined as a machine that is able to do everything a human being can do. And then, more recently, that definition has been weakened a bit, by defining it as a machine that can perform all the cognitive tasks a human being can do, leaving aside physical forms of intelligence.

But she also stresses that she is not very fond of the term AGI:

Host: Do you think the use of the phrase AGI is really useful for AI scientists like you, or do you see it more as a distraction?

Mitchell: I think it is a bit of a distraction. People feel they can take intelligence as something separate from its manifestation in humans, in the human brain and body, and isolate it [...]. And I am not convinced that this is really meaningful or that it gives us a clear direction to follow.

However, Google scientist Blaise Agüera y Arcas does not get bogged down in terminological debates and argues that the challenge is not making models more general, but making them better in several respects:

I think it is really just about getting better at a bunch of things we all care about, like truthfulness, reasoning, memory, planning, having a consistent perspective over long periods of time, and so on [...] So I do not think it is about how far away we are from any one particular thing, but rather how quickly these things are improving, and when they will become reliable enough to do a variety of different things that, at the moment, I would say they are not reliable enough to do autonomously.

So there is no agreement even among the scientists most closely involved in the subject. Some say AGI is not a useful term; others say it is, precisely because generalization is what is still missing; and still others say we are almost there, and that all that remains is improvement.

So what is my opinion? AGI or no AGI?

For me, as Chollet says, the key lies in the “G” of the term: “general.” This “G” symbolizes a meaningful shift in the evolution of artificial intelligence and neural networks, from specialized models, like those that dominated the 2010s, toward more generic and versatile models such as today's LLMs, which can store a vast amount of human knowledge and interact in natural language.

And, as Agüera y Arcas says, we will move closer to that “G” as new algorithms are developed that address the shortcomings of current LLMs by giving them new capabilities, ones that allow them to solve problems such as those in Chollet's ARC Prize.

As for me, I will keep talking about AGI, even if every time I do so I have to link to this article so that people do not mistake me for an AI Bro.

Image generated by ChatGPT 4o. Prompt: “Make an image of an AI Bro”.

See you next fortnight! 👋👋