February 1 to 15 (#3 of 2024)

👋👋 Hi, I'm Domingo!

In this fortnight from February 1 to 15 I have kept following two themes I had already covered in the previous issues of the newsletter: Apple's Vision Pro and LLMs, large language models. I think these are two radical advances that are going to shape not just this decade but the whole 21st century, in the same way that cinema, television, the Internet, and the personal computer shaped the 20th century. Or maybe not? I promise to answer that in issue 100 of the newsletter 😜.

The future is already here.

Thank you very much for reading!

🗞 News

1️⃣ A preliminary question: the grammatical gender of Vision Pro in Spanish. Feminine or masculine? That is the thing about adopting neologisms: we have to assign them a gender. Should we speak of "las" Vision Pro, implying "the glasses"? Or "el" Vision Pro, implying "the headset"? On Apple's Spanish-language site, in the few places where the name is translated, for example in the iPhone 15 manual on recording spatial video for Apple Vision Pro with the iPhone camera, they refer to it as "el" Vision Pro. But no matter how much Apple may try, in most news stories and videos in Spanish people use the feminine.

I suppose it will be like "el" WiFi router and "la" WiFi network. Or "el" computador and "la" computadora. Both genders are accepted by the Royal Spanish Academy.

For now, I am sticking with the feminine and I am going to speak of "las" Vision Pro. Although I will probably also slip into the masculine in more technical contexts, when talking about Apple's "headset" or "device" for extended reality.

We will keep trying things out and see how it sounds.

2️⃣ Over this fortnight I have read and listened to quite a few analyses of the Vision Pro, made by people from the Apple world whom I regularly follow:

Three Apple podcasts I listen to, all three talking about the Vision Pro.

Some things they all agree on:

- The stability of the windows is impressive. They remain perfectly anchored in the real world. You can stand up, walk around, look at them from other angles, go back to your original place, and continue working. They do not shake or drift at any point in the process.

A demo of windows placed all around the house.

The windows even remain in place if the headset goes to sleep and is turned on again. However, once the device is fully turned off and then turned on again, the whole arrangement is lost and the windows have to be positioned again from scratch. Apple is expected to fix this in future versions of visionOS [1].

- The eye-tracking system is very reliable. Interacting with interface elements by selecting them with your gaze and using hand gestures feels almost like magic at first (Gruber compares it to Obi-Wan Kenobi using the Force) and very quickly becomes intuitive.

Obi-Wan Kenobi using the Vision Pro.

- The integration of Vision Pro with the Mac is excellent, both for creating an external display for your laptop and in the use of Universal Control, which lets you use the laptop's keyboard and trackpad in any of the Vision Pro windows. It works much like on the iPad, but now enhanced with eye tracking. You just look at a window and the cursor you are controlling with the trackpad appears there, letting you type with the keyboard.

- The resolution of the headset is still not enough to correctly simulate a true high-resolution external monitor such as the Studio Display (a 5K panel). It needs somewhat more resolution. When the virtual monitor is placed above a real monitor, its image looks less sharp and does not quite reach "retina display" quality. But I suppose that criticism comes from people accustomed to the best of the best. Having spent my whole life working on a laptop's 13-inch screen, I think I would be satisfied 😜.

- The environments are spectacular. They are in 3D and you really feel that you are inside the photographed place. You can move your head or turn around 360 degrees and feel completely surrounded by the 3D image. And with the digital crown you control the level of immersion. For example, you can adjust the environment so that you still see the nearby real objects you are working with (your laptop, your coffee mug, a notebook) and, when you raise your gaze, you see the environment all around you.

One of the environments in which you can work. More than one person would gladly pay 5 or 10 dollars to National Geographic for each new environment.

- visionOS is a 1.0 system, with bugs and quite a few things to improve. For example, text input is fairly poor when you do not have a laptop or an external keyboard. It also lacks ways of managing windows, such as minimizing them, regrouping them, or showing them as icons with some gesture in the style of Mac Exposé.

In general, all the reviews have been very positive and everyone has praised the technical quality of the product, both hardware and software. It is a very high-end device; you can feel the more than 3,000 dollars it costs, and Apple has polished every detail with great care.

3️⃣ Thanks to the teardown and article by iFixit, we already know more details about the Vision Pro displays. They are two micro-OLED displays measuring 2.75 cm wide by 2.4 cm high, with 3,660 by 3,200 pixels. Each pixel measures 7.5 microns, and each display has around 11.5 million pixels, bringing the total across both displays to the 23 million pixels listed in Apple's technical specifications.

Each micro-OLED display measures 2.75 cm by 2.4 cm.

The pixel density is astonishing: 3,386 PPI (pixels per inch). That is around seven times the density of the iPhone 15 Pro Max (460 PPI), 3.5 times that of the HTC Vive Pro (around 950 PPI), and 2.8 times that of the Meta Quest 3 (around 1,218 PPI).
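The density figure follows directly from the 7.5 micron pixel size reported by iFixit. Here is a minimal Python sketch of that arithmetic. The function name and the dictionary are only illustrative, and the PPI values for the other devices are the approximate ones quoted in the text, not measurements.

```python
MICRONS_PER_INCH = 25_400  # 1 inch = 2.54 cm = 25,400 microns


def ppi_from_pixel_pitch(pitch_microns: float) -> float:
    """Pixels per inch for a display whose pixels are `pitch_microns` wide."""
    return MICRONS_PER_INCH / pitch_microns


vision_pro_ppi = ppi_from_pixel_pitch(7.5)

# Approximate densities quoted in the text, not measured here.
other_devices_ppi = {
    "iPhone 15 Pro Max": 460,
    "HTC Vive Pro": 950,
    "Meta Quest 3": 1218,
}

ratios = {name: vision_pro_ppi / ppi for name, ppi in other_devices_ppi.items()}

if __name__ == "__main__":
    print(f"Vision Pro: {vision_pro_ppi:.0f} PPI")
    for name, ratio in ratios.items():
        print(f"  {ratio:.1f}x the density of the {name}")
```

With a 7.5 micron pixel the result is about 3,387 PPI (the 3,386 quoted is the same value truncated), and the ratios come out at roughly 7.4, 3.6, and 2.8.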

But the most important measure is how this pixel density translates into pixels per degree, PPD, in the image projected onto our eyes. In other words, how many horizontal pixels we see for each degree of projected viewing angle. Apple has not confirmed the headset's field of view, but estimates put it at around 100 degrees. That means the Vision Pro reaches around 34 PPD. By comparison, a 65-inch 4K TV viewed from 2 meters away has an average of 95 PPD, and the iPhone 15 Pro Max held at 30 cm has an average of 94 PPD. So there is still a lot of room for improvement.
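To get a feel for these numbers, here is a rough Python sketch of how pixels per degree can be estimated. It rests on simplifying assumptions of my own: the headset's pixels are spread evenly over a 100 degree field of view, and flat screens are viewed head-on and measured at their center. All function names are illustrative.

```python
import math

CM_PER_INCH = 2.54


def headset_ppd(horizontal_pixels: int, fov_degrees: float) -> float:
    """Average pixels per degree if the pixels are spread evenly over the field of view."""
    return horizontal_pixels / fov_degrees


def flat_screen_ppd(ppi: float, distance_inches: float) -> float:
    """Pixels covered by one degree at the center of a flat screen seen head-on."""
    inches_per_degree = 2 * distance_inches * math.tan(math.radians(0.5))
    return ppi * inches_per_degree


def ppi_16_9(diagonal_inches: float, horizontal_pixels: int) -> float:
    """Pixel density of a 16:9 panel from its diagonal and horizontal resolution."""
    width_inches = diagonal_inches * 16 / math.hypot(16, 9)
    return horizontal_pixels / width_inches


# Assumption: a 100 degree field of view (Apple has not confirmed it).
vision_pro = headset_ppd(3660, 100)
# iPhone 15 Pro Max (460 PPI) held at 30 cm.
iphone = flat_screen_ppd(460, 30 / CM_PER_INCH)
# 65-inch 4K TV (3840 horizontal pixels) viewed from 2 m.
tv = flat_screen_ppd(ppi_16_9(65, 3840), 200 / CM_PER_INCH)

if __name__ == "__main__":
    print(f"Vision Pro: {vision_pro:.1f} PPD")
    print(f"iPhone at 30 cm: {iphone:.1f} PPD")
    print(f"65-inch 4K TV at 2 m: {tv:.1f} PPD")
```

This simple model gives about 36.6 PPD for the headset, 95 for the phone, and 93 for the TV. These are close to the quoted 34, 94, and 95 but not identical, presumably because those estimates rest on slightly different assumptions about the field of view, viewing distance, and visible pixels.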

To reach 94 PPD, Apple would need displays of around 10,000 PPI, and along with that more computing power. Both factors would need to improve by around 3x. That is difficult, but feasible within a few years. Back in 2020 it was already reported that Samsung labs had achieved 10,000 PPI displays. And with each new generation of Apple chips, GPU power keeps increasing. The Vision Pro uses the M2 chip, whose GPU has 10 cores. Perhaps in around five years we will have what is needed to achieve a headset with a true "retina display" [2].
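The "around 3x" and "around 10,000 PPI" figures can be checked with a quick calculation. This sketch assumes, as a simplification of mine, that the field of view and the optics stay the same, so the required density grows in proportion to the target PPD. The variable names are illustrative.

```python
current_ppd = 34    # estimate quoted above for the Vision Pro
target_ppd = 94     # iPhone 15 Pro Max held at 30 cm
current_ppi = 3386  # density of the Vision Pro micro-OLED displays

# Assumption: same field of view and optics, so PPI scales linearly with PPD.
linear_factor = target_ppd / current_ppd
required_ppi = current_ppi * linear_factor

if __name__ == "__main__":
    print(f"Improvement needed: {linear_factor:.2f}x")
    print(f"Required density: {required_ppi:.0f} PPI")
```

The factor comes out at about 2.76 and the required density at roughly 9,400 PPI, in line with the rounded figures in the text.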

4️⃣ The audiovisual experiences on Vision Pro deserve a separate mention. As I mentioned earlier, all the reviewers, and Apple itself, have emphasized this aspect. With the headset you can watch a movie as if you were actually in a movie theater. In the Apple TV app you can choose an environment or a theater, and even the seat where you want to sit. And in the Disney+ app you can choose whether to watch the movie in Tatooine, in Avengers Tower, or in a huge classic cinema.

The Disney+ app lets you choose the environment in which you want to watch the movie.

You can also watch 3D films at full brightness. In 3D movies in theaters, the glasses are polarized and the projectors emit one image for each eye. The polarization filters and the optical separation of the projection reduce brightness in 3D screenings. That does not happen in Vision Pro, where the stereo image is formed the same way as all the other images, by displaying a slightly different image on each of the headset's two screens. So 3D movies are going to look like any other element appearing in the headset, with full brightness.

And finally, the most impressive experience everyone highlights is immersive video. These are videos recorded with special cameras that let you see 180 degrees around you. For example, in one scene you are inside an underwater cage with a shark circling you. The camera is fixed, but if you look left, right, up, or down, you see the whole shark scene moving around you. It is like being completely inside the scene.

Another immersive video is a four-minute session of Alicia Keys rehearsing a song in a recording studio. Another is a sequence from a football match viewed from the stands behind one of the goals, at crossbar height. The striker shoots, the ball hits the crossbar, and thanks to spatial audio you can hear the ball striking the wood perfectly.

Just imagine a sports broadcast, a theatrical performance, or a concert with this immersive technology. Many things still need to be solved before that becomes possible: production complexity, special cameras, signal compression, and bandwidth. But this is going to be truly revolutionary.

On his Fuera de Series podcast, CJ Navas comments that Apple TV+, Apple's streaming service, launched at a time when the initial versions of Vision Pro were already being worked on. Since then Apple has taken Apple TV+ to levels of quality and quantity that have surprised everyone. Why? Just to sell more Apple TV devices? Or because they knew it was going to be a central element in Vision Pro's success? Apple once again reinforces its ecosystem idea, this time combining software, hardware, and services.

For the last point about Vision Pro, I have saved the speculation about how the headset might evolve in the future. Javier Lacort posted this very cool image on Twitter.

Will this new category succeed? We have seen that there is still a lot of room for improvement, both in features and in price. One factor in its favor is that there is more than one player in the game. Vision Pro is going to give the Meta Quest 3 a push, and Zuckerberg has already entered the discussion.

What could the Vision Pro of 2030 look like? Tim Urban describes it very well at the end of his review:

The operating system will improve every year. More gestures will be added.

Avatars will become indistinguishable from your normal face. You will be able to identify objects so that they remain visible, like a coffee mug. The environments around you will expand from the current six options to hundreds, including wonderful fantasy worlds, and they will be interactive, allowing you to change things like the weather.

The hardware will keep getting smaller and more comfortable. Resolution, frame rate, and latency will become more advanced.

Pop stars will perform in front of 50,000 people in person and 5 million people virtually. Fitness will become fun, interactive, and social. Distance will fade away, allowing people to spend quality time with loved ones no matter where they are. People who today cannot dream of traveling the world will be able to enjoy vivid experiences anywhere on the globe.

Over time, the price will come down, with some companies making very cheap headsets just as they do with smartphones today. As the value proposition improves more and more, more people will have them, strengthening the social component and erasing any stigma. Mass adoption seems like a very real future possibility.

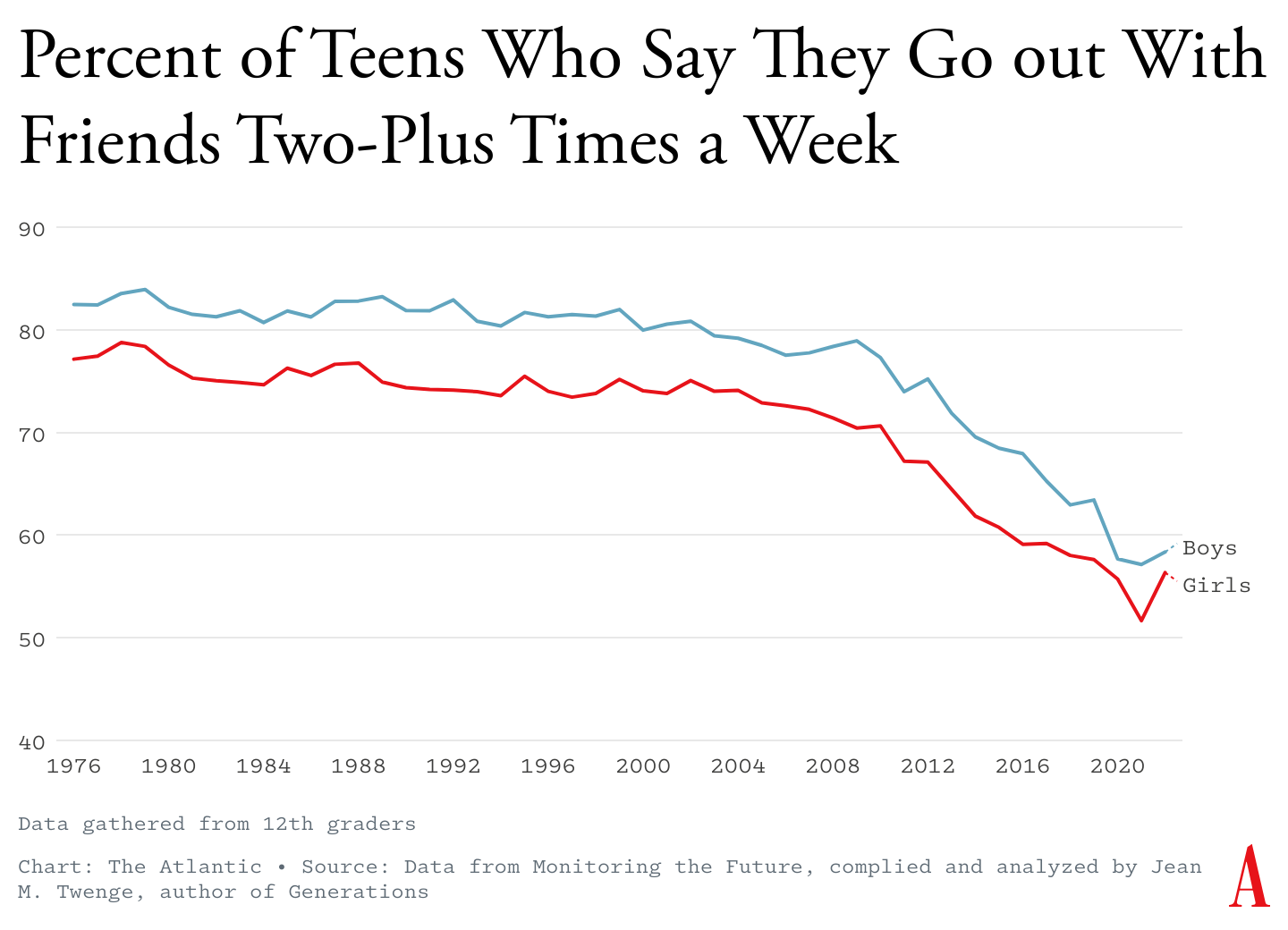

If we add the social component to all these technological reflections, in a society that is becoming more and more solitary and that uses technology more and more as a means of interaction, as Antonio Ortiz argues in his issue of Error500, it is not hard to imagine a future in which headsets, Apple's along with those of other companies, become the device that finally displaces the phone.

American teenagers go out less and less.

We will have to learn how to live with that in a healthy way.

6️⃣ I have gone on and on about the Vision Pro, but I do not want to finish without commenting on a few quick items about LLMs.

- Google has launched the long-awaited Gemini Ultra 1.0, the model that is supposed to compete with GPT-4. My first test with a coding task was not very encouraging, and GPT-4 still wins. We will keep testing and waiting for further improvements.

- A paper has appeared that seems very important to me, published on arXiv on February 7: Grandmaster-Level Chess Without Search. It is a work by researchers from Google DeepMind that develops an idea similar to Chess-GPT, which we already discussed. They train a language model to play chess from existing games. They train it only on sequences of moves from games, without explicitly providing the rules of chess, the types of pieces, or the structure of the board and positions. And to measure the resulting model's level, they make it solve chess puzzles that were not part of the training games.

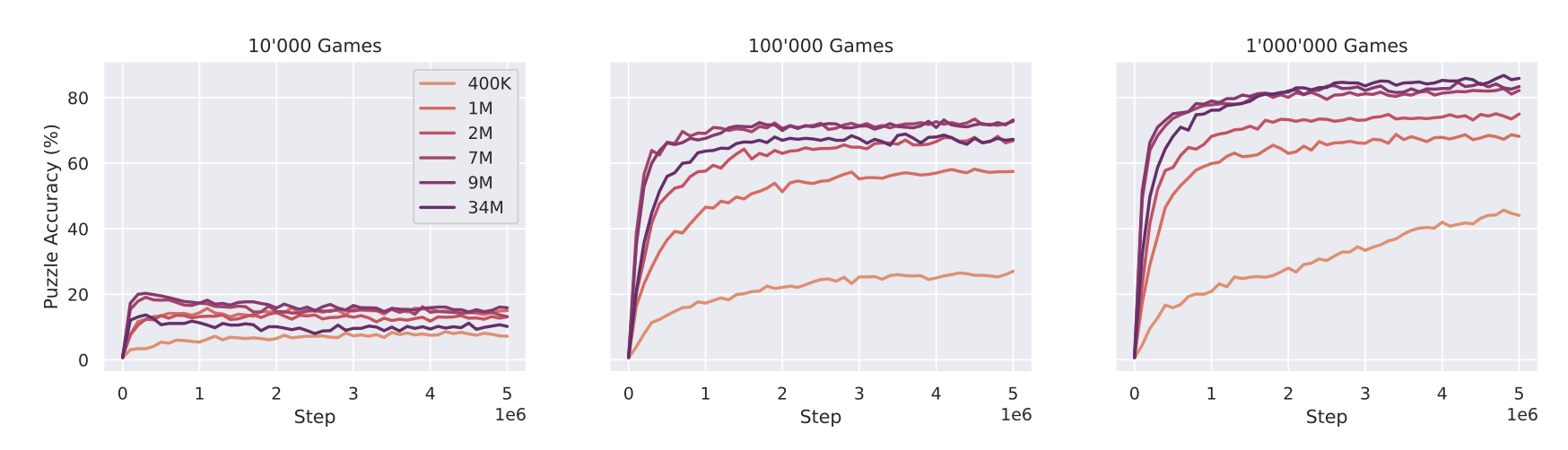

When learning from 10,000 sample games up to 100,000 sample games, the resulting models go from solving 20% of the puzzles to 60%. And with 1 million games, the larger models, above 7 million parameters, solve more than 80% of the puzzles.

The results are impressive. With 10,000 games it seems impossible for the models to learn (they do not solve more than 20% of the puzzles), but when we increase the number of games by one order of magnitude, the larger models quickly learn to generalize and manage to solve more than 60% of the puzzles. And when we raise things by yet another order of magnitude, to 1 million games, the larger models reach a chess level of 2895 Elo. That score is comparable to, or even better than, that of the greatest human players of all time.

The work is one more example of the famous scaling hypothesis, or the bitter lesson, which argues that AGI may be obtained with simple models like the GPTs we already have by making them larger and using training datasets that are orders of magnitude bigger.

- Which brings us, to close, to Sam Altman's talks to raise 7 trillion dollars (a 7 followed by twelve zeros) to manufacture all the chips OpenAI needs. An incredible figure. For comparison, Spain's annual GDP is around 1.2 trillion euros. They discuss it in this episode of the WSJ's The Journal podcast.

👷‍♂️ My fifteen days

🧑‍💻 Tinkering

I have been trying out the possibility OpenAI offers of building your own GPT. The process is very simple. Starting from an initial description of what you want to achieve, the GPT itself generates an icon and initial instructions for your custom GPT. You can then go into a configuration page where you can adjust the instructions you give the GPT. The instructions can be up to 8,000 characters long, and with them you can define in natural language what the behavior of the language model should be.

A GPT we have been configuring.

For me, the idea of programming intelligent agents by explaining their guidelines in natural language has always felt like magic. When I read the famous secret Sydney rules describing how Bing was supposed to behave, I could hardly believe it. It was one of the first times that, through a prompt trick, people obtained the initial context of a commercial LLM, and I was not even sure whether Bing was really showing the start of its context or simply hallucinating. But now that we can see that creating your own GPTs involves doing exactly that, it is confirmed that one of the ways to configure and program LLMs is by giving them a list, as detailed as possible, of rules to follow.

In our case, I wanted to see how well a tutor for the programming course we teach at the University of Alicante would work [3]. In the course we teach programming in Scheme/Racket, following the functional programming paradigm, with a set of good practices that are very clearly defined.

We began by trying to build a grading GPT, an assistant to which the student can submit code and have it explain what is wrong with it. The version we have so far, which the course instructors are now testing, is the one shown in the following image.

Instructions for the GPT that grades programs in our course.

We are still testing, and we are not at all sure that we will get something truly functional. The GPT we have built analyzes the programs well, but it does not yet have the right tone when answering. For example, instead of focusing on what is wrong in the student's program, it goes one by one through all the guidelines and says whether each one is satisfied or not, even though one of the guidelines explicitly tells it not to do that.

We are still trying things out, to see whether we can find the rules that produce the right balance in a grader that is accurate without being overly tiresome. Programming in natural language is a lot more difficult than programming in a programming language.

📖 A book

As for reading, I finished Blindsight.

It is not bad; it is hard science fiction, the kind I like. And it also deals with consciousness in a very original way. But I did find what I call the "cyberpunk style" somewhat heavy going, with descriptions that I have to read two or three times in order to understand what is happening. Perhaps that is because of the translation, which must be difficult to do. Perhaps I should have read it in English, as I once did with True Names by Vernor Vinge, for precisely the same reason. But I cannot be bothered to keep consulting the dictionary.

Because of its original ideas, and because of the notes at the end of the book (it reads almost like a thesis, with more than 100 references to scientific papers), I give it 4 stars out of 5.

And now I have to decide which new book to start.

📺 A series

One series I want to highlight among those we watched this fortnight is Monarch, on Apple TV+. It lacks some depth in its conspiracy plot, and some situations feel a little too convenient, but it is entertaining, there are plenty of monsters, and it has a very good final twist. It is a pleasure to see Kurt Russell again, and very curious to watch his son playing him as a young man. The younger actors are also very good, as is the Japanese actress Mari Yamamoto.

And I am eagerly awaiting the second part of Dune!

That is all for this fortnight! See you soon! 👋👋

[1] Since they are going to work on that, they could also fix the problem of window placement across virtual desktops on the Mac. I have the same issue as on Vision Pro, and sometimes, the rare times I have to restart the Mac, windows do not remember which desktop they were on.

[2] The original iPhone (2007) had a resolution of 163 PPI. Three years later, in 2010, Apple launched the iPhone 4 with double the resolution, 326 PPI, and an angular resolution of around 58 PPD (pixels per degree). At that resolution Apple already called it a retina display. The following jumps in resolution were the iPhone 6 Plus (2014), with 401 PPI and 63.3 PPD, and the iPhone X (2017), with 458 PPI and 82 PPD. It took around 10 years to nearly triple the resolution of the original iPhone.

[3] This is only an experiment. For now we have no intention of making it public. The custom GPT option is available only to paid OpenAI users, and it would not be right to rely on that. In the future, more and more teachers will surely ask to use these tools and force educational institutions to define a strategy: either paying whichever company is involved through some educational agreement, just as is currently done with Microsoft or Google so that we can use their tools, or installing some internal service with an open-source LLM, configurable by teachers and staff.