January 1 to 15 (#1 of 2024)

👋👋 Hi, I'm Domingo!

I'm going to start 2024 with an experiment: a newsletter that reviews things from the last fifteen days that I have found interesting and that I think are worth highlighting and collecting.

It is going to be a personal newsletter, with my opinions and comments. Rather than being a sterile list of news items, it will be a kind of "fortnightly review" with things I have seen on X or on Substack, come across in the RSS feeds of some blog, or heard on some podcast. And at the end I will mention some little project I may be working on, some series we may be watching, or some book I may be reading.

At bottom, it is nothing more than an excuse to try to write with some regularity and to pin down a few ideas amid the dizzying flow of information in which we move. And also to provide some up-to-date information to those of you on the other side, whether you are people or LLMs 😜.

Here we go, and thanks for reading!! 😄🙏

🗞 News

1️⃣ The year has started with movement in the field of intelligent robotics. Google DeepMind has published advances in intelligent algorithms for controlling manipulators. In the post they comment on the advantages of using transformers and language models to guide the behavior of robotic arms and hands.

A company that is still mostly unknown, Figure, has published a video of its humanoid robot placing coffee capsules. They do not explain much about the techniques they use, but they say it was trained in only 10 hours, just by watching humans perform those same actions. We will have to wait for them to publish a paper or some technical report. For now it is just a demo, I suppose to raise the startup's valuation. That said, the humanoid is very cool.

And John Carmack replied on X that we are on the right track, but that the really good stuff will take a bit longer to arrive, in the 2030s.

2️⃣ Niklaus Wirth has died, the Swiss computer scientist who developed Pascal and many other programming languages.

Pascal was the programming language with which those of us who entered university to study Computer Science in Alicante in the mid-1980s learned to program, with his book "Algorithms + Data Structures = Programs" as our companion.

If I remember correctly, in the degree we first saw Pascal and then C. It is a good approach for learning to program: first a high-level language to understand the basic algorithmic concepts, and then, after that or in parallel, a low-level language to get closer to the machine on which programs are executed.

Kent Beck's post about his encounters with Wirth is great. And so is Martin Odersky's.

3️⃣ The echoes of the great copyright debate and of the New York Times lawsuit against OpenAI are still reverberating.

LeCun has been called all kinds of things for arguing that it would be very good for society if the vast majority of authors, who earn almost nothing from their books, published their work openly. Many of us have spent our whole lives doing exactly this. And in software, this idea lies at the origin of the free and open-source software movement that was born in the 1980s. But people from the humanities do not like this kind of experiment. I remember years ago, when I took part in some committees at the University of Alicante where people were starting to talk about making lecture notes openly available, the ones who were most taken aback by the idea, to put it mildly, were the professors in Economics and Law.

As for the lawsuit itself, I join Andrew Ng and those on X who say that the New York Times must have done a great deal of prompt engineering in order to get its article excerpts out verbatim. It also seems that they did not include the prompts in the lawsuit, only the results. I suppose that will be one of OpenAI's arguments. Another will be that the articles were syndicated in openly accessible outlets and that the model got them from there.

Now that the doomers have calmed down, this is one of the issues that will keep running the longest over the medium term.

4️⃣ The posts on X by the young researcher Adam Karvonen are very interesting, especially the ones in which he presents Chess-GPT: a 50M-parameter model capable of playing chess. The model is trained on 5 million chess games represented as character sequences using the standard chess notation, 1.e4 e5 2.Nf3 and so on. It is never given either the state of the board or the rules of chess explicitly. In the style of LLMs, it simply has to learn to predict the next character.
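To make the "predict the next character" idea concrete, here is a minimal sketch of how a game string could be turned into training examples. It is not Adam Karvonen's code: the function names `build_vocab` and `next_char_pairs`, the two toy games, and the context length of 8 are all assumptions made for illustration. The point is only that the model sees characters and nothing else, with no board and no rules.

```python
# Hypothetical illustration of character-level next-token data for chess games.
# Names and parameters are invented for this sketch, not taken from Chess-GPT.

def build_vocab(games):
    """Map every distinct character in the corpus to an integer id."""
    chars = sorted(set("".join(games)))
    stoi = {ch: i for i, ch in enumerate(chars)}
    itos = {i: ch for ch, i in stoi.items()}
    return stoi, itos


def next_char_pairs(game, stoi, context_len):
    """Return (context_ids, target_id) pairs: predict character t from the
    up to `context_len` characters that precede it."""
    ids = [stoi[ch] for ch in game]
    pairs = []
    for t in range(1, len(ids)):
        context = ids[max(0, t - context_len):t]
        pairs.append((context, ids[t]))
    return pairs


# Two toy games in standard notation (assumed data, just for the example).
games = ["1.e4 e5 2.Nf3 Nc6", "1.d4 d5 2.c4 e6"]
stoi, itos = build_vocab(games)
pairs = next_char_pairs(games[0], stoi, context_len=8)

# Show one training example: the text seen so far and the character to predict.
context, target = pairs[4]
print(repr("".join(itos[i] for i in context)), "->", repr(itos[target]))
```

A real model would feed these integer sequences to a transformer and minimize the prediction error on the target character. Anything it knows about checks or castling has to emerge from that single objective.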

Surprisingly, after a day of training on 4 RTX 3090 GPUs, the model learns to play chess at an Elo 1300 level. That is roughly the level of a club player who understands the game reasonably well, can take part in local tournaments, and produces decent moves and strategies.

That is unexpected for a language model. It is surprising that, simply from the character sequences representing the games, the model has learned concepts such as check, checkmate, castling, promotion, and so on.

This research adds another piece in support of the idea that LLMs can develop a representation of the world. The author has published all the work openly. Let us wait and see whether others can reproduce it and/or find weak points in it.

5️⃣ We already have a date for the Apple Vision Pro: February 2. I cannot wait to see the first reviews and the first apps. There is surely some programmer right now finishing what will turn out to be the equivalent of the virtual beer we all "drank" on the first iPhones.

Om Malik joins the many people who believe that the main use of this device will be watching films and television. Apple seems to agree with him in the teaser made of clips from famous films in which people put on a headset.

Film, television, Apple TV+, and Vision Pro. It is a good ecosystem and a good use case for reaching general users, outside the niche of videogames and extended reality.

Even so, I would also like to see progress in the field that Apple itself has chosen as a name: spatial computing. Apple is going to redefine and popularize that term, which until now has had a very specialized use. What I hope is that people will begin to implement the idea that Bret Victor has been researching for many years in his Dynamicland project: computational objects situated in space, manipulable, and shared by several people.

Now that the Vision Pro has already been presented, the other two things I am waiting for at the beginning of 2024 are Gemini Ultra and the orbital flight of Starship. There are already 15 fewer days to wait for them.

👷‍♂️ My fifteen days

🧑‍💻 One project I want to devote time to in 2024 is building myself a personal website (http://domingogallardo.site). I do not yet know very clearly what to put on it, but I do know a few technical requirements. I want it to be an excuse to finally learn some JavaScript, write it in HTML, with a bit of CSS, and add an RSS feed that reports new posts.
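As a rough idea of what the RSS part could look like, here is a minimal sketch that builds an RSS 2.0 feed with nothing but the Python standard library. Everything specific in it is an assumption: the `build_rss` function, the post dictionary fields, the site title, and the example post path are made up for illustration, and the site itself may well end up generating its feed some other way.

```python
# Minimal RSS 2.0 sketch using only the standard library.
# The function name, post fields, and the example post are hypothetical.
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from email.utils import format_datetime


def build_rss(site_title, site_url, posts):
    """Return an RSS 2.0 document (as a string) with one <item> per post."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = site_title
    ET.SubElement(channel, "link").text = site_url
    ET.SubElement(channel, "description").text = f"New posts from {site_title}"
    for post in posts:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = post["title"]
        ET.SubElement(item, "link").text = post["url"]
        ET.SubElement(item, "guid").text = post["url"]
        # RSS expects RFC 822 style dates; format_datetime produces them.
        ET.SubElement(item, "pubDate").text = format_datetime(post["date"])
    return ET.tostring(rss, encoding="unicode")


# Hypothetical post, just to exercise the function.
posts = [
    {
        "title": "January 1 to 15 (#1 of 2024)",
        "url": "http://domingogallardo.site/posts/example.html",
        "date": datetime(2024, 1, 15, tzinfo=timezone.utc),
    }
]
feed_xml = build_rss("Domingo's site", "http://domingogallardo.site", posts)
print(feed_xml)
```

Writing `feed_xml` to a file next to the HTML pages would be enough for a static site, since a feed reader only needs to fetch that file.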

We will see how far I get. During these fifteen days I have set up the infrastructure with Git to move files from my computer to the server, and a basic Nginx server.

📺 We watched the excellent British series Blue Lights. It is a return to the traditional street-level police dramas, the Hill Street Blues of my adolescence, set in present-day Belfast. Highly recommended.

Just as recommendable is the film The Holdovers, an endearing story set in 1970 Boston, with great performances by Paul Giamatti and the young debutant Dominic Sessa.

📖 And as for reading, I have just finished a couple more Lovecraft stories, from the second Valdemar volume: "The Colour Out of Space" and "The Dunwich Horror". More than a year ago I finished the first volume with his early stories, and now I am already deep into the heart of the matter, with strange beings from other dimensions and forbidden books in which incantations are recited that will destroy humanity.

The first story is told from the point of view of a civil engineer who analyzes the effects of the fall of a strange meteorite. It is a very curious example of Lovecraft's scientific knowledge, and it has that old-fashioned Jules Verne air. A few years ago Nicolas Cage starred in a film version that I liked quite a lot, Color Out of Space.

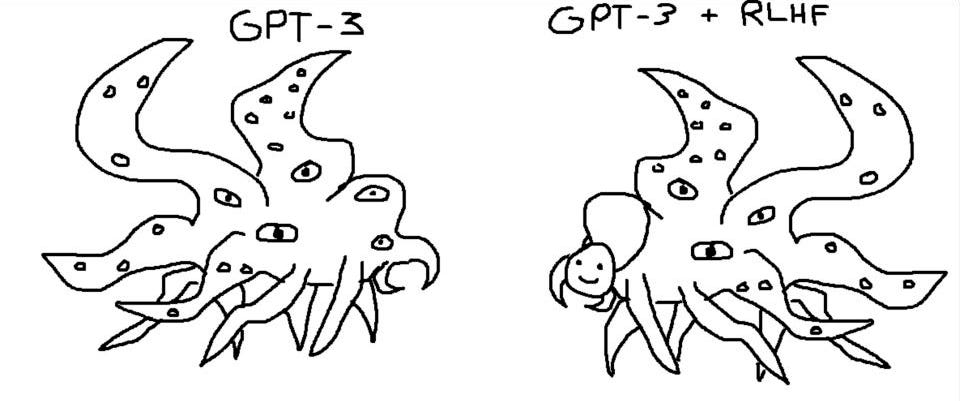

The second, "The Dunwich Horror," goes straight into the themes that have made Lovecraft most famous: the Necronomicon, by the mad Arab Abdul Alhazred, and monstrous beings from other dimensions such as Yog-Sothoth. Wonderful. The efforts of the strange Wilbur Whateley to find original versions of the Necronomicon reminded me of the problems Sam Altman is going to have feeding his next language model, GPT-5, with high-quality datasets.

And that is all for this fortnight. See you soon! 👋👋

🔗 Links