March 1 to 15 (#5 of 2024)

👋👋 Hi, I'm Domingo!

In the first fortnight of March, Anthropic launched the first language model that, in my opinion, is comparable to GPT-4, and perhaps even better. And SpaceX managed to take the gigantic Starship to orbital velocity. This is the rocket that promises to reduce the cost of sending satellites into space by orders of magnitude and that will one day carry astronauts back to the Moon.

The footage of Starship reentering the atmosphere is astonishing. You can see the high-temperature plasma produced by friction. Shortly afterward the vehicle lost control and exploded.

Thank you very much for reading!

🗞 News

1️⃣ Let us begin with Starship's test flight from two days ago, March 14. In this third test, Starship reached orbital velocity for the first time.

Starship is SpaceX's next-generation reusable launch vehicle, designed to carry humans and payloads to Earth orbit, the Moon, and Mars. It promises to revolutionize access to space, reducing the cost per kilogram to low Earth orbit from around $3,000 to something like $100 or even $10. Starship can carry a payload of 100 to 150 tons, more than five times the payload of the Falcon 9, the rocket SpaceX currently uses.

Starship launch.

There are still several tests ahead in which SpaceX must achieve milestones that it has not yet reached:

- Recover the Super Heavy booster that lifts Starship, bringing it back to land as we are already used to seeing with Falcon 9.
- Ignite Starship's Raptor engines while in orbit.
- Reenter and recover Starship itself. In this test it did not complete full reentry; it exploded as it was beginning to enter the atmosphere.

A good summary of the test is, as always, Daniel Marín's article. For now, three more test flights are planned for this year, although Elon Musk speaks of as many as six new launches. I will keep reporting the results here.

2️⃣ A new Lex Fridman interview with Yann LeCun, Meta's chief AI scientist. LeCun is one of the pioneers and most recognized scientists in the field of deep learning and neural networks. From his position at Meta, he has enormous influence over the future evolution of the LLM industry, above all because of his stance in favor of open models, such as the LLaMA (Large Language Model Meta AI) family.

LeCun argues that open access to LLMs allows greater collaboration, experimentation, transparency, and safety. It also makes it possible to adapt them to different sensitivities and cultures, allowing for a diversity and richness of models. According to him, this is the only way to combat the inevitable biases associated with proprietary models created by a handful of powerful companies.

The interview is extremely interesting and starts strong, with very technical answers that mention alternatives to autoregressive LLMs. According to LeCun, current models are not enough to achieve human-like intelligence; new approaches are needed, such as JEPA (Joint-Embedding Predictive Architecture). After that, the interview turns toward more general issues related to the future social impact of AI and open models.

Some excerpts.

On intelligent assistants:

AI will basically amplify human intelligence. It is as if each of us had a team of intelligent AI assistants. They could be smarter than us. They will do what we ask of them, perhaps carrying out a task in ways much better than we could ourselves, because they would be smarter than we are. And so it is as if all of us were the boss of a team of super-intelligent virtual people. Therefore, we should not feel threatened by this any more than we should feel threatened by being the manager of a group of people, some of whom are smarter than we are. I certainly have a lot of experience with that, of having people working with me who are smarter than I am.

On AI as something similar to the invention of the printing press:

AI is going to make humanity smarter. An equivalent event in human history to what could be provided by the generalization of AI assistants is the invention of the printing press. It made everyone smarter, because people could have access to books. Books were much cheaper than they had been before, and so many more people had an incentive to learn to read, which was not the case before. And people became smarter. This paved the way for the Enlightenment. There would not have been an Enlightenment without the printing press. It enabled philosophy, rationalism, the retreat from religious doctrine, democracy, and science.

On AGI:

General AI, AGI, is not going to be an event. The idea, in some way popularized by science fiction and Hollywood, that someone is going to discover the secret of AGI and then switch on a machine and suddenly we will have AGI, that is simply not going to happen. It is not going to be an event. It is going to be gradual progress. Are we going to have systems that can learn from video how the world works and learn good representations? Yes. Before we bring them to the scale and performance we observe in humans, it is going to take quite a while. It will not happen in a day. Are we going to have systems that can have a large amount of associated memory so they can remember things? Yes, but again, it is not going to happen tomorrow. Some basic techniques still need to be developed. We have many of them, but making all of this work together as a complete system is another story.

On AI doomers:

AI doomers imagine all kinds of catastrophic scenarios about how AI could escape or take control and basically kill us all, and that is based on a bunch of assumptions that are mostly false. So the first assumption is that the emergence of superintelligence is going to be an event, that at some point we are going to discover the secret and switch on a machine that is superintelligent, and because we have never done that before it will take over the world and kill us all. That is false. It is not going to be an event. We are going to have systems that have all the characteristics of human-level intelligence but whose level of intelligence might be like that of a cat, or a parrot perhaps, or something like that. Then we are going to work on making those things smarter. And as we make them smarter, we are also going to put some safety barriers in place and learn how to set those barriers so that they behave properly.

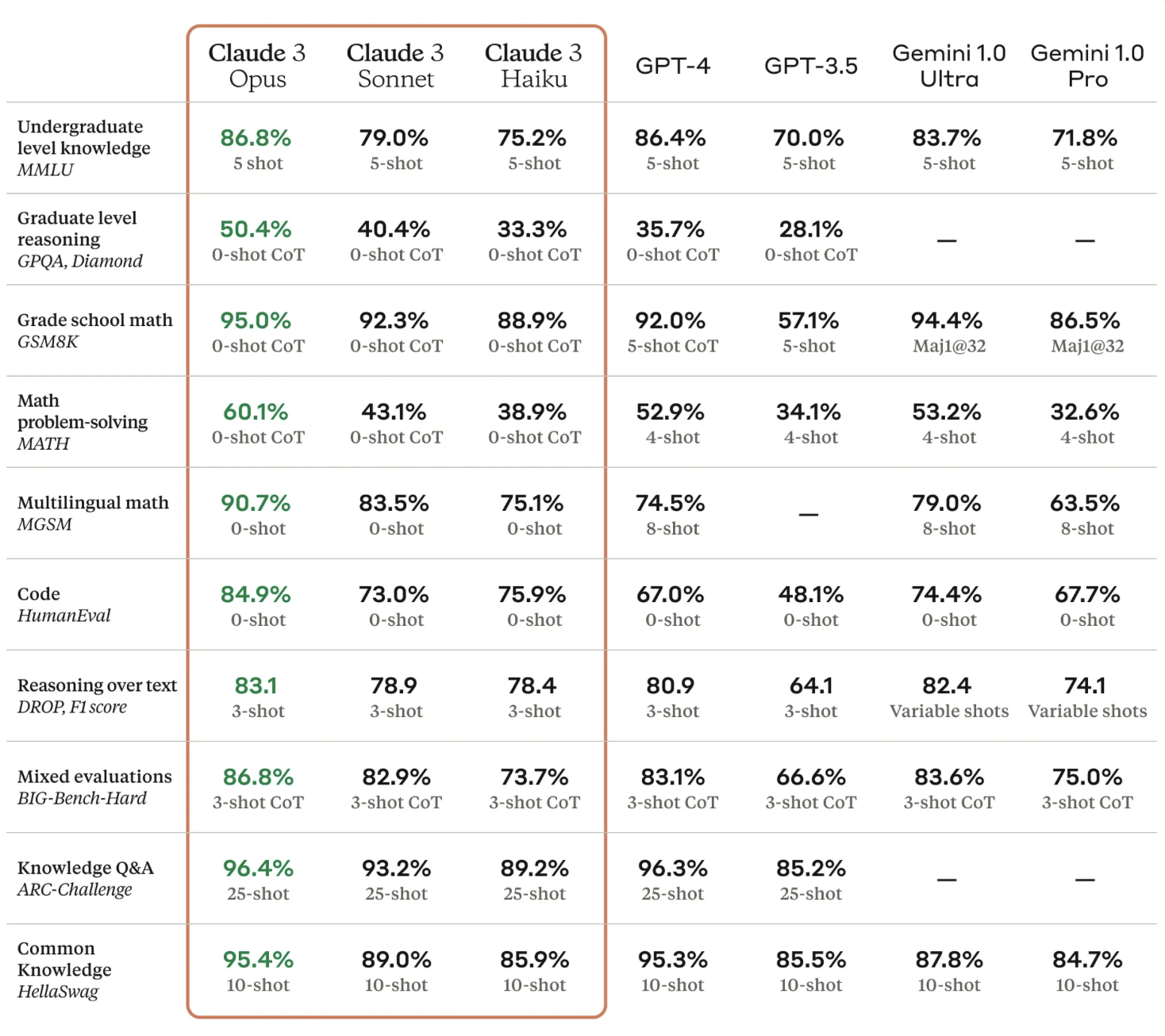

3️⃣ Anthropic has presented the new Claude 3 family of models. In its announcement it explains their characteristics and their curious names: Haiku, Sonnet, and Opus. Opus is the most powerful.

Opus can be tried in the console Anthropic provides for interacting with its API. Unlike Gemini, which disappointed me enormously, this seems to me a model that competes very well with GPT-4. It even feels warmer and more "human" than OpenAI's model, which seems increasingly rigid and formal, surely because of all the adjustments made to it to avoid criticism and bias.

In the table Anthropic presents, comparing these models with the existing ones, Claude 3 Opus surpasses GPT-4 on some tests. And the smallest model, Haiku, surpasses GPT-3.5. A major advance.

I have tested the models with a very simple challenge in which they have to predict the result of actions performed on a set of shapes. The result confirms what Anthropic says about Opus: the model is indeed comparable to GPT-4. I explain the experiment later on, in the "My fifteen days" section.

4️⃣ I like Anthropic's fairly open style regarding the prompts they use for Claude 3. For example, Amanda Askell, one of Anthropic's engineers, has shared on X the system prompt that they include at the beginning of all interactions. It has a very high level of abstraction, with phrases such as:

You should give concise answers to very simple questions, but provide detailed answers to more complex and open-ended questions.

Or this one, so that the model always tries to have as objective a point of view as possible without falling into the trap of trying to please both sides:

If asked about a controversial topic, you should try to provide careful reflections and objective information without minimizing harmful content or implying that there are reasonable perspectives on both sides.

In another X thread, Anthropic prompt engineer Alex Albert asks Opus to make a self-portrait and repeatedly tells it to make it more sophisticated, with prompts such as:

"This is okay! But I want you to try to make it even better."

Or:

"Wow, you're doing great! But I know you're capable of much more, try to make it better this time."

In this way he gets Opus to go from the self-portrait shown on the left to the one on the right, an animation of a sphere made of points.

Anthropic has very interesting resources on how to build prompts:

- Page on prompt engineering with techniques and examples.
- Prompt library, with examples ranging from generating SQL queries to creating poems, character-driven stories, or cooking recipes.

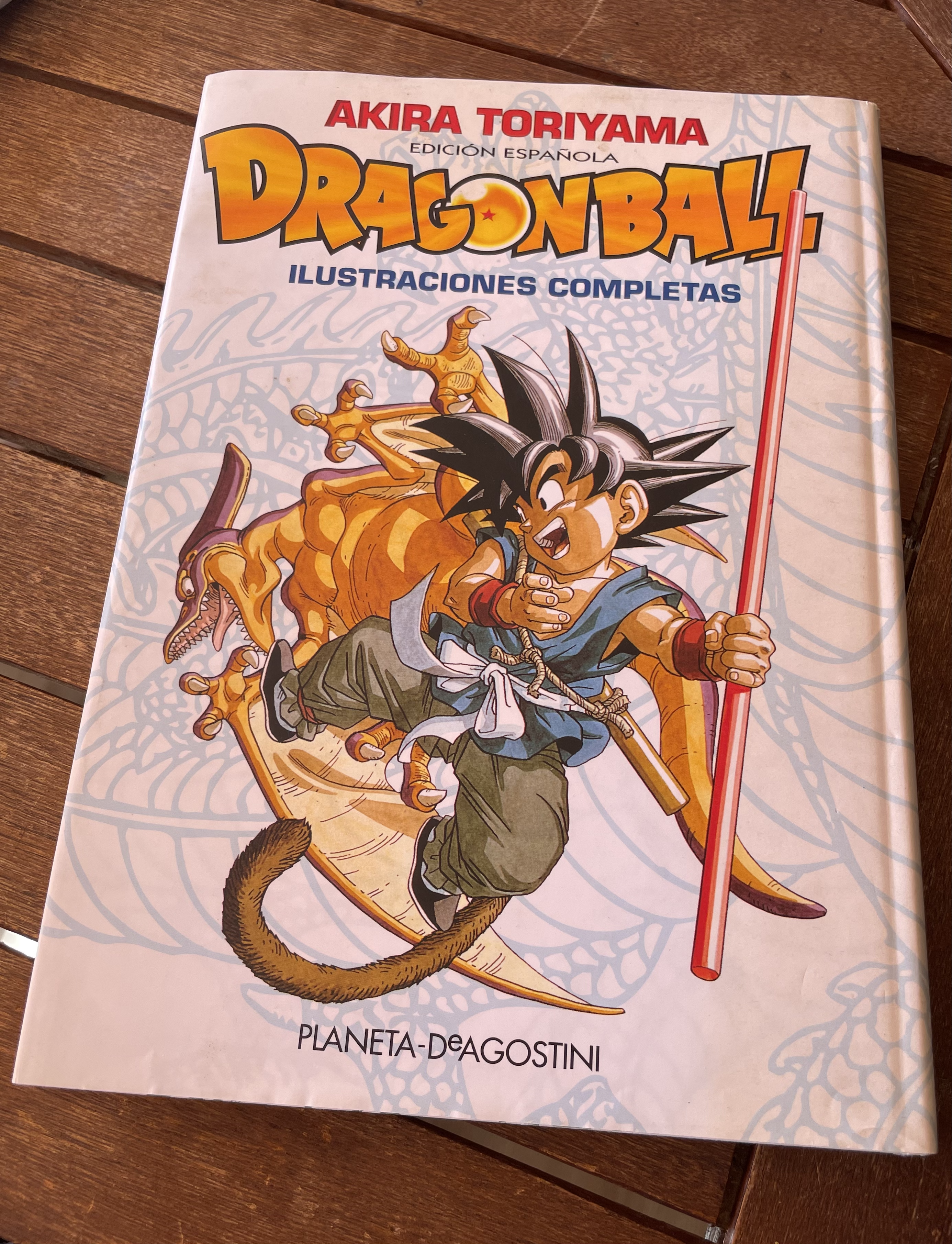

5️⃣ Remembering Akira Toriyama, whose death at the age of 68 was announced on March 8, I posted on X a couple of images from his Dragon Ball illustration book, already out of print.

The first image shows a self-portrait and one of his comments, which reveals how demanding he was with himself. Too demanding. Fortunately, in the interview included in the book, the interviewer noted that Akira laughed while talking about something similar. And the second image is a wonderful gallery of some of the characters who already appeared at the beginning of Dragon Ball.

Kiko Llaneras posted on X a wonderful thread about that time when we followed Dragon Ball on regional TV. I followed it on TV3, which could be picked up in Alicante thanks to the antennas installed by Acció Cultural. At that time I was studying Computer Science in Valencia, and back home they would record the series for me so I could watch it on the weekends when I returned to Alicante. Kiko explains very well in the thread the longing we had to find more material and more information about those cartoons that had us hooked. There was no web then, no Google. We had to make do with photocopied fanzines that we bought in the little store Ateneo had in its early days.

Much later I bought the complete Dragon Ball collection of volumes. They are worn out from how many times we have read them in the family.

Akira Toriyama was a genius, and Dragon Ball is genius too. The variety of characters, the humor, the way he draws action, the originality of his panels: it is all incredible. And on top of that, the story is a great soap opera: the characters evolve, have children, die, and come back to life. It is a fun comic overflowing with imagination.

Here are a few examples of panels.

👷‍♂️ My fifteen days

👨‍💻 Tinkering

As I mentioned earlier, one of the things I have been doing this fortnight is trying to assess in some objective way the capabilities of different LLMs.

One criticism often made of them is that they lack the ability to plan, and that they do not have a very elaborate model of the physical world. I thought of testing this with a challenge I remember trying when GPT-3.5 first appeared: making it predict the result of moving shapes around in a simplified blocks world. Back then I found that GPT could not solve these kinds of problems. What happens with more advanced models such as GPT-4 or Opus?

More specifically, the prompt I proposed was the following:

Solve the following problem:

Imagine a column with the following elements from top to bottom: circle, square, triangle.

Now imagine that the action "move top to the right" takes the element at the top of one column, removing it, and places it to the right, on top of the element in the column to the right. If the column on the right has no elements, it is placed at the bottom.

Describe the final result of the following actions:

1. Move top from column 1 to the right.
2. Move top from column 1 to the right.

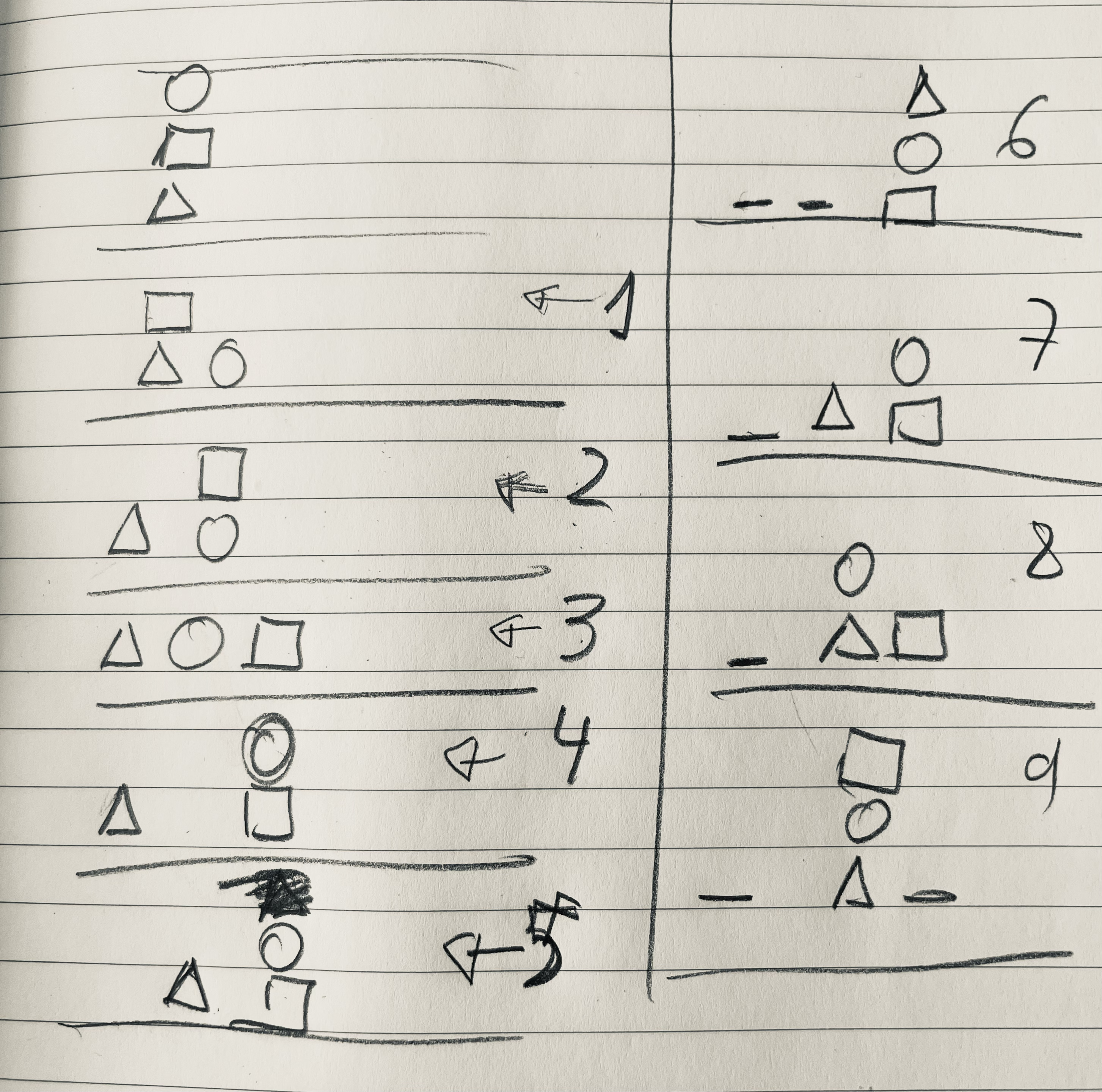

The problem can be made more complicated by adding actions and by including the action of moving to the left. For example, the following figure shows the result after 9 actions.
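As a rough illustration of how the progressively harder variants can be produced, here is a minimal Python sketch that rebuilds the prompt above with only the first N actions. The names `PROBLEM`, `ACTIONS`, and `build_prompt` are hypothetical, chosen just for this example, and only the three actions quoted in this post are included; I built the prompts by hand, so this is only one way it could be automated.

```python
# Fixed part of the prompt, as quoted in this post.
PROBLEM = (
    "Solve the following problem:\n\n"
    "Imagine a column with the following elements from top to bottom: "
    "circle, square, triangle.\n\n"
    'Now imagine that the action "move top to the right" takes the element '
    "at the top of one column, removing it, and places it to the right, on "
    "top of the element in the column to the right. If the column on the "
    "right has no elements, it is placed at the bottom.\n\n"
    "Describe the final result of the following actions:\n\n"
)

# Only the actions that appear in this post; longer sequences would be
# appended to this list.
ACTIONS = [
    "Move top from column 1 to the right.",
    "Move top from column 1 to the right.",
    "Move top from column 2 to the right.",
]


def build_prompt(actions, count):
    """Return the prompt containing only the first `count` actions, numbered from 1."""
    lines = [f"{i}. {text}" for i, text in enumerate(actions[:count], start=1)]
    return PROBLEM + "\n".join(lines) + "\n"


# One prompt per difficulty level: 1 action, 2 actions, 3 actions.
prompts = [build_prompt(ACTIONS, n) for n in range(1, len(ACTIONS) + 1)]
```

Each element of `prompts` can then be pasted into a model's console, one difficulty level at a time.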

Let us remember that LLMs are models with no kind of intermediate memory in which to store partial results, so they cannot reflect on them or make plans using them. Imagine trying to solve this problem in your head, without being able to draw the intermediate states on paper.

In fact, in their attempts to solve the problem, the models list partial results within the conversation itself as a way of mitigating the lack of memory. It is something like the prompting technique where the model is told to think "step by step".
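To check a model's answer without drawing the states by hand, the blocks world can be simulated in a few lines. The following is a minimal sketch, not the exact procedure I used: the function name `apply_actions` and the `(column, direction)` encoding are hypothetical, columns are assumed to extend to the right as needed, and "left" is assumed to be the mirror image of the "move top to the right" action defined in the prompt.

```python
from typing import Dict, List, Tuple

# An action is (source column, direction); columns are numbered from 1.
Action = Tuple[int, str]


def apply_actions(initial_top_to_bottom: List[str],
                  actions: List[Action]) -> Dict[int, List[str]]:
    """Apply the actions and return the non-empty columns, each listed top to bottom."""
    # Each column is stored bottom-to-top, so the top element is the last item.
    columns: Dict[int, List[str]] = {1: list(reversed(initial_top_to_bottom))}
    for source, direction in actions:
        if direction not in ("right", "left"):
            raise ValueError(f"unknown direction: {direction}")
        stack = columns.get(source, [])
        if not stack:
            raise ValueError(f"column {source} is empty")
        # Assumption: "left" mirrors the "right" action from the prompt.
        target = source + 1 if direction == "right" else source - 1
        if target < 1:
            raise ValueError("cannot move left from column 1")
        # Remove the top of the source and place it on top of the target;
        # on an empty target it simply becomes the bottom element.
        columns.setdefault(target, []).append(stack.pop())
    return {n: list(reversed(s)) for n, s in sorted(columns.items()) if s}


result = apply_actions(["circle", "square", "triangle"],
                       [(1, "right"), (1, "right")])
print(result)  # {1: ['triangle'], 2: ['square', 'circle']}
```

For the two-action prompt, the expected final state is therefore a triangle alone in column 1 and, in column 2, the square on top of the circle.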

Now for the results. I tried the problem in Claude's console, GPT's console, and the Gemini Advanced web interface. I started with a single action and added one more action in each test, following the drawing above. The results were as follows:

With only one action

When asked to solve the problem using only the first action, all the models solve it correctly.

With the first two actions

When we complicate the problem by adding a second action, it already becomes too difficult for:

- GPT-3.5
- Gemini Advanced (Ultra)
- Claude 2.1

It is surprising that Google's Gemini Ultra, the model being promoted as being as powerful as GPT-4, cannot solve it either. Something is going on with Google's model.

The models that do solve it correctly are:

- Claude 3 Sonnet
- GPT-4
- Claude 3 Opus

Sonnet, despite being a model comparable in size to GPT-3.5, solves it correctly, just like the most powerful models.

With a third action

If we add action 3:

3. Move top from column 2 to the right

Sonnet stops doing it correctly, and the only models left that solve the test correctly are:

- GPT-4
- Claude 3 Opus

When do they stop solving it correctly?

- GPT-4: With 6 actions it always gets it right. With 7 actions it sometimes gets the result right and sometimes not. With 8 actions it always gets it wrong.
- Claude 3 Opus: It does well with 4 actions, but with 5 it no longer does.

This confirms the feeling that Opus and GPT-4 are the most powerful models currently available.

We will test again when OpenAI releases GPT-4.5 or GPT-5.

📖 A book

I finished The Dispossessed by Ursula K. Le Guin. 5 stars out of 5 for an outstanding 50-year-old book that explores the tension between personal freedom and social justice. It is one of the few books to have won all three of the science fiction world's major prizes at once: the Hugo, the Nebula, and the Locus.

And deservedly so. I liked everything about it: the plot; the character of Shevek and his struggle to develop the physical theory of simultaneity and to achieve a better society; the relationship between Shevek and Takver; the way language is used on Urras to establish social values; and the atmosphere and detail with which the two societies of Urras and Anarres are explained. The events and discoveries at the end are also wonderful, though I will not describe them in order to avoid spoilers.

A great book that could become a great television miniseries. Let us see whether some production company dares to take it on.

📺 A series and a film

Of the series we started watching this fortnight, I want to highlight two: Expats, on Prime Video, and Shogun, on Disney+.

In Expats, director Lulu Wang tells a story that brings us close to characters from different social classes in Hong Kong during the Umbrella Revolution of 2014. It features excellent actresses such as Nicole Kidman, the young Ji-young Yoo, and the endearing Ruby Ruiz, who plays the nanny of Kidman's children.

And as for Shogun, we have only seen the first episode, but that is enough to see the level of this historical superproduction.

And as for films, I watched, twice, the second part of Dune by Villeneuve. It is not to be missed, a true visual spectacle. Five stars out of five.

Until the next fortnight, see you soon! 👋👋